When you send a robot into a burning building, a collapsed mine, or a high-radiation zone, milliseconds matter.

In these situations, latency isn’t just a number; it’s the difference between success and failure, safety and catastrophe.

In the world of robotics, decision latency is the total time it takes for a sensor event, such as detecting a falling beam or a leaking valve, to translate into an action. For robots that operate in hazardous environments, this latency must be measured in tens of milliseconds or less.

Understanding the Problem: Latency in Cloud-Connected Robots

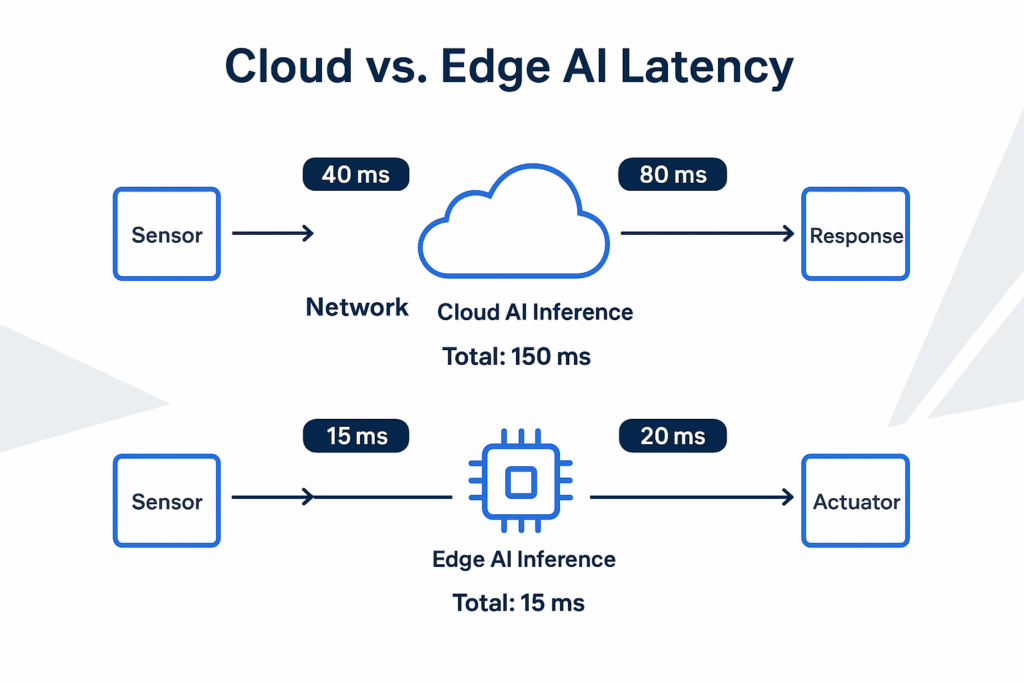

Most robotic systems today are designed around a centralized model: sensors capture data, send it to the cloud, an AI model processes it, and then the response is sent back to the robot.

On paper, this seems efficient: cloud AI offers powerful processing capabilities, massive datasets, and centralized control.

But in practice, this architecture introduces significant time delays that make it unsuitable for real-time control in hazardous conditions.

Here’s what those delays look like in real terms, broken down by stage:

| Stage | Typical time | Description |
|---|---|---|
| Sensor capture & transmission | 2–5 ms | Time for the robot’s sensors to send raw data to the network interface |
| Network routing to cloud (4–6 hops) | 30–50 ms | Even within telecom-controlled networks (like Verizon/Bell), practical round-trip times average 40–50 ms |
| AI inference in cloud | 20–100 ms | Depends on the neural model size and image resolution (e.g., ResNet or YOLOv8 processing multiple frames) |
| Response transmission back to robot | 20–30 ms | Network return latency |
| Total decision latency | About 70–180 ms | Cumulative delay before the robot even begins to act |

Now, compare that to a robot with onboard (Edge) AI inference:

| Stage | Typical time | Description |
|---|---|---|
| Sensor capture | 2–5 ms | Local to the hardware |
| Onboard inference (with Coral TPU or Jetson Nano) | 10–20 ms | Optimized local model execution |
| Motor actuation | 1–2 ms | Direct local control |
| Total decision latency | 13–27 ms | Immediate response loop, up to 10× faster than cloud-based models |
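To make the two budgets easy to re-check or adapt, here is a minimal Python sketch that sums the per-stage ranges from the tables above. The dictionary and function names are illustrative choices, not part of any robotics framework, and the figures are simply the ones quoted in the tables. The straight sum for the cloud path comes to 72–185 ms, which the cloud table quotes in rounded form.

```python
# Per-stage latency ranges in milliseconds (low, high), copied from the tables above.
CLOUD_STAGES = {
    "sensor capture & transmission": (2, 5),
    "network routing to cloud": (30, 50),
    "AI inference in cloud": (20, 100),
    "response transmission back to robot": (20, 30),
}
EDGE_STAGES = {
    "sensor capture": (2, 5),
    "onboard inference": (10, 20),
    "motor actuation": (1, 2),
}


def total_latency(stages):
    """Return (best_case_ms, worst_case_ms) summed over every stage."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


cloud_total = total_latency(CLOUD_STAGES)
edge_total = total_latency(EDGE_STAGES)
print(f"cloud: {cloud_total[0]}-{cloud_total[1]} ms")
print(f"edge:  {edge_total[0]}-{edge_total[1]} ms")
```

Swapping in measurements from your own network and hardware is the quickest way to see which stage dominates the budget.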

Why AI Edge Matters for Hazardous Robotics

In hazardous robotics (underground inspection, firefighting drones, chemical plant maintenance), conditions can change faster than a cloud-based AI can respond.

A 40 ms delay might not seem long, but at a drone speed of 10 m/s (36 km/h), that’s 40 cm of flight before any correction, far enough to clip a wall or miss a detection.
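The arithmetic behind that figure is just speed multiplied by latency. The short Python sketch below (the function name is an illustrative choice) reproduces the 40 cm example and applies the same calculation to the worst-case totals quoted earlier: 27 ms for the edge path and 180 ms for the cloud path.

```python
def distance_travelled_cm(speed_m_per_s, latency_ms):
    """Distance covered during the latency window, in centimetres."""
    # Metres per second times milliseconds gives millimetres; divide by 10 for centimetres.
    return speed_m_per_s * latency_ms / 10.0


SPEED_M_PER_S = 10.0  # drone speed used in the text (36 km/h)

for label, latency_ms in [
    ("40 ms delay", 40),
    ("edge worst case (27 ms)", 27),
    ("cloud worst case (180 ms)", 180),
]:
    print(f"{label}: {distance_travelled_cm(SPEED_M_PER_S, latency_ms):.0f} cm")
```

At 10 m/s, every millisecond of latency costs one centimetre of uncorrected travel, so the gap between the two architectures can be read directly in centimetres.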

Beyond speed, network reliability in these environments is unpredictable. Structural obstacles, electromagnetic interference, and even heat gradients can cause connectivity drops.

Because inference runs on the robot itself, an edge-based system also keeps operating autonomously when connectivity fails.
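One way to picture this autonomy is a control step that decides locally on every frame and treats the cloud link as best-effort telemetry. The sketch below is a simplified illustration under stated assumptions: `EdgeController`, `infer_local`, `upload`, and `max_backlog` are hypothetical names, the gas threshold in the demo is made up, and a real system would likely run the upload on a separate thread so a slow link cannot stall the loop.

```python
from collections import deque


class EdgeController:
    """Decide locally on every frame; buffer telemetry until the link is available."""

    def __init__(self, infer_local, upload, max_backlog=100):
        self.infer_local = infer_local  # hypothetical on-device model call
        self.upload = upload            # hypothetical uplink call; raises ConnectionError when offline
        self.backlog = deque(maxlen=max_backlog)  # oldest records are dropped when full

    def step(self, frame):
        action = self.infer_local(frame)  # decision is computed without touching the network
        self.backlog.append((frame, action))
        self.flush()
        return action

    def flush(self):
        """Send buffered records in order; stop quietly if the link is down."""
        while self.backlog:
            try:
                self.upload(self.backlog[0])
            except ConnectionError:
                return
            self.backlog.popleft()


# Demo with stand-in callables: the uplink is down, yet a decision still comes back.
def offline_upload(record):
    raise ConnectionError("link down")


robot = EdgeController(
    infer_local=lambda frame: "stop" if frame["gas_ppm"] > 50 else "continue",
    upload=offline_upload,
)
print(robot.step({"gas_ppm": 120}))
print(len(robot.backlog))
```

The bounded backlog is a deliberate choice: during a long outage the robot keeps the most recent records rather than exhausting memory.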

Case Example: Autonomous Inspection Robots

Imagine an inspection robot navigating a refinery to detect gas leaks or structural defects.

Its onboard camera captures thermal and visual data. Locally trained neural networks (e.g., MobileNet or YOLOv8 Nano) identify anomalies in real time.

If AI inference were cloud-based, even a 150 ms total delay could mean the difference between identifying a small leak and missing a rupture.

By running inference on-device, the robot can stop, alert operators, and contain risk in less time than the blink of an eye.

Even with advanced mobile infrastructure, such as cloud nodes embedded within telecom networks, measured latencies remain around 40–50 ms in ideal conditions.

This demonstrates that physical distance, routing layers, and shared network load still introduce unavoidable delays.

Edge AI in Manufacturing: The Same Logic Applies

Edge AI isn’t just for hazardous fieldwork.

In modern manufacturing lines, where robotic arms operate near human workers, safety interlocks and collision detection systems also depend on sub-30 ms response times.

Cloud AI simply cannot guarantee deterministic response times in those environments.

Edge inference, which runs locally trained, lightweight neural nets on embedded accelerators, keeps the safety response independent of network conditions.
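A simple way to enforce a response budget like this in software is to time each inference call and fall back to a safe action when the budget is exceeded. The following Python sketch assumes a 30 ms budget taken from the figure above; `run_with_deadline`, `infer`, and `safe_action` are hypothetical names. It checks the deadline after the call returns rather than pre-empting it, so it illustrates the idea and is not a substitute for a hardware watchdog or a certified safety controller.

```python
import time

DEADLINE_MS = 30.0  # budget taken from the sub-30 ms figure in the text


def run_with_deadline(infer, frame, safe_action, deadline_ms=DEADLINE_MS,
                      clock=time.perf_counter):
    """Run one inference and return (action, elapsed_ms).

    If the call took longer than deadline_ms, the model's answer is discarded
    and safe_action (for example, a stop command) is returned instead.
    """
    start = clock()
    action = infer(frame)
    elapsed_ms = (clock() - start) * 1000.0
    if elapsed_ms > deadline_ms:
        return safe_action, elapsed_ms
    return action, elapsed_ms


# Demo with a trivial stand-in model that returns immediately.
action, elapsed_ms = run_with_deadline(lambda frame: "continue", frame=None, safe_action="stop")
print(action)
```

The `clock` parameter exists so the timing logic can be exercised with a fake clock in tests, without depending on how fast the host machine happens to be.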

The Bigger Picture: A Hybrid Future

While cloud AI remains essential for large-scale data aggregation, model retraining, and analytics, the decision loop must move to the edge.

The future is a hybrid model:

- The cloud trains and refines.

- The edge acts and learns locally.

- Together, they balance intelligence and autonomy.

Edge AI transforms robotics from being connected to being aware.

Final Thoughts

In robotics, milliseconds define outcomes.

Whether it’s saving a worker from harm, detecting a gas leak, or preventing a collision, latency is not a luxury metric; it’s the heartbeat of autonomy.

Edge AI ensures that robots can make split-second, context-aware decisions without waiting for the cloud to think.

That’s not just an optimization; it’s survival engineering.

Author’s Note:

This article is part of an ongoing series exploring the intersection of AI, IoT, and Edge Computing.

Future articles will examine how hybrid learning models and real-time federated updates will redefine autonomy in both industrial and consumer applications.